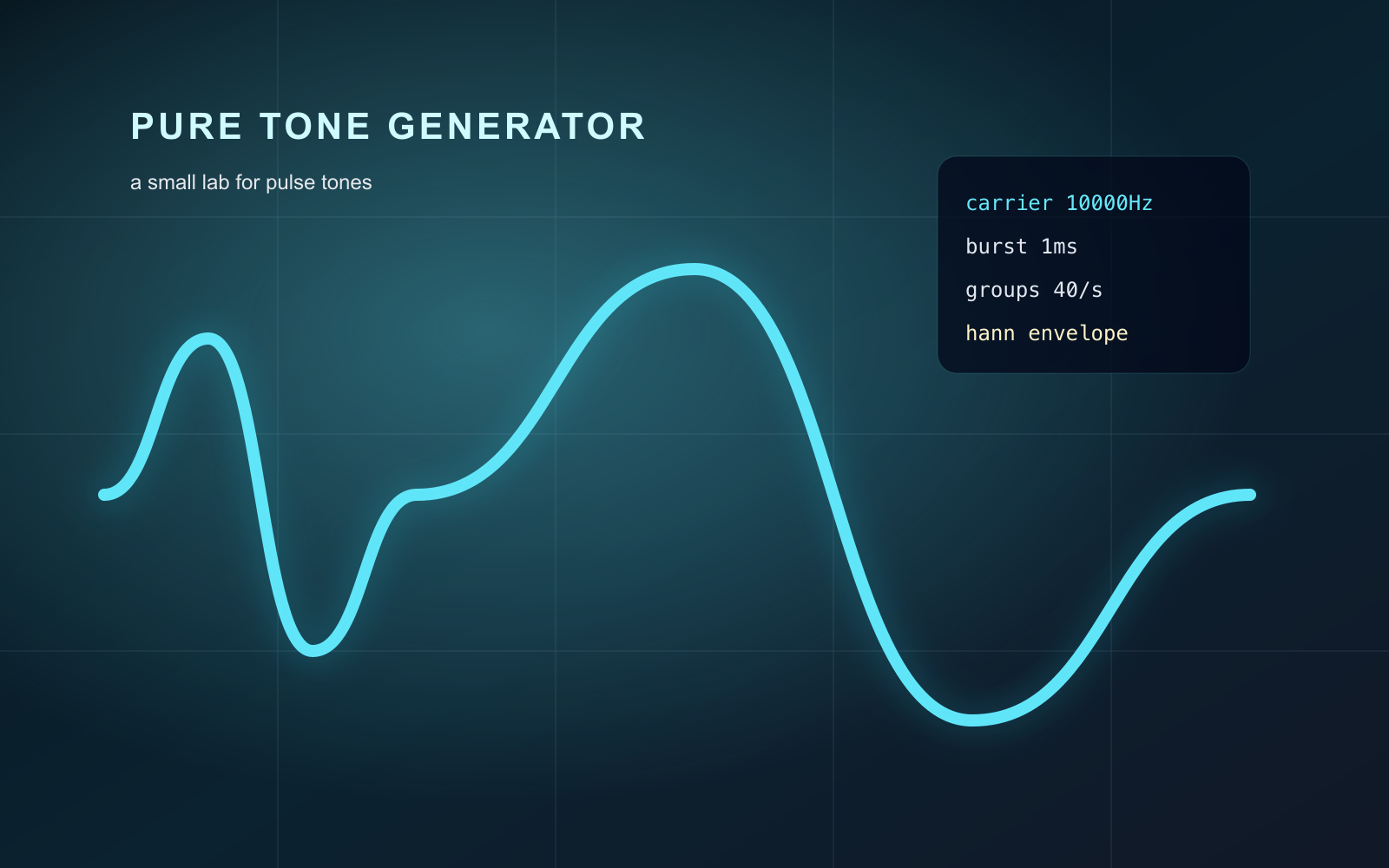

The Pure Tone Generator is a small browser lab for creating pulse tones, previewing their waveform, playing them at low volume, and exporting a WAV file for sound and signal experiments.

This experiment didn’t start because I suddenly became interested in sound.

It started when a psychology professor approached me one day and asked if I could help build a small tool.

At the time, we had been discussing a research direction related to 40 Hz stimulation. In recent years, several studies have explored whether light and sound stimulation at specific frequencies could influence gamma oscillations in the brain, and whether it might have some relationship to Alzheimer’s-related pathology. The professor wanted a more intuitive way to generate and observe different forms of pulse tones, so he asked if I could create a prototype that would allow the lab to adjust parameters, play sounds, and visualize waveforms.

At first, I treated it as a very straightforward request.

In my mind, I thought:

«“It’s probably just an audio generator.”»

So the first version was extremely simple: a few sliders, a play button, and a canvas. As long as it could adjust frequency, burst duration, and groups per second, and successfully produce sound, I thought that was more or less enough.

But once I actually started building it, I realized the problem was far less simple than I had imagined.

Because the moment I began adjusting those parameters myself, I suddenly noticed something strange:

«I actually had no idea what “one millisecond” sounded like.»

It’s a very strange question.

Normally, we think of time as something obvious and intuitive. One second, one minute, one hour—those are easy to understand. But once the scale shrinks down to milliseconds, human intuition starts breaking apart surprisingly fast. Especially when a sound is only allowed to exist for a few milliseconds before disappearing immediately afterward, it stops behaving like what we traditionally think of as “sound.”

Under some parameters, it feels like pitch.

At other times, it feels more like a click.

And in certain moments, it feels less like sound altogether and more like some kind of signal.

That was the moment I started realizing:

I might not just be building a tool.

I was actually trying to understand time itself.

And so, what originally began as a simple implementation task slowly pulled me deeper and deeper into the subject.

I started revisiting the fundamentals of sound synthesis, relearning sine waves, carrier frequencies, burst windows, and envelopes. Concepts I had technically learned before, but never truly felt, suddenly became tangible.

A pure sine wave is relatively easy to understand. It looks like a clean, stable curve oscillating smoothly up and down. But pulse tones are completely different. They feel more like fragmented pieces of sound: a carrier wave is only allowed to exist briefly, followed by silence, before reappearing again at a fixed rhythm.

And the truly fascinating part was hidden inside that cycle of:

«appear → disappear → reappear»
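That cycle can be sketched in a few lines. The following is a minimal Python illustration, not the tool's actual browser code: the function name `pulse_tone`, the 48 kHz sample rate, and the example values (40 groups per second, 5 ms bursts, a 1000 Hz carrier) are all assumptions chosen for the example. It also assumes a hard on/off gate with no envelope and a carrier phase that restarts at every burst.

```python
import math

def pulse_tone(duration_s, groups_per_second, burst_ms, carrier_hz, sample_rate=48000):
    """Return float samples in [-1, 1]: a sine carrier that is only
    present during the first burst_ms of each repetition period."""
    period_n = round(sample_rate / groups_per_second)  # samples per repetition
    burst_n = round(sample_rate * burst_ms / 1000)      # samples where the carrier is on
    total_n = round(duration_s * sample_rate)

    samples = []
    for n in range(total_n):
        pos = n % period_n  # position inside the current repetition
        if pos < burst_n:
            # "appear": the carrier restarts from phase zero in each burst
            samples.append(math.sin(2 * math.pi * carrier_hz * pos / sample_rate))
        else:
            # "disappear": silence until the next repetition begins
            samples.append(0.0)
    return samples

# One second of 5 ms bursts of a 1000 Hz carrier, repeated 40 times per second.
tone = pulse_tone(duration_s=1.0, groups_per_second=40, burst_ms=5, carrier_hz=1000)
print(len(tone))  # 48000
```

With these values each repetition is 1200 samples long, of which only the first 240 carry sound, which is why shortening the burst changes the character of what is heard so quickly.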

I began constantly adjusting the burst duration.

When the burst was long, the sound felt more like a stable pitch. But as the burst became shorter, down to only a few milliseconds, the entire perception started changing. That was the first time I genuinely felt that the human brain treats time less like a continuous stream and more like a series of events to be detected.

The sound stopped being “just sound.”

It became a form of stimulation.

A rhythm.

A signal entering the nervous system.

Eventually, I found myself no longer satisfied with simply listening to these sounds.

Because I started wondering:

«Was what I thought I was hearing actually consistent with the waveform being generated by the program?»

So the canvas slowly stopped being just UI and became an observation tool.

I added Full View, One Cycle View, and Burst View because I realized the exact same signal looked almost like entirely different things depending on the time scale. In Full View, the pulse resembled a rhythmic arrangement. In One Cycle View, the ratio between sound and silence suddenly became extremely clear. And Burst View zoomed all the way into the carrier wave itself.
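One way to think about the three views is as three different time windows over the same sample buffer. The sketch below is a hedged Python illustration under that assumption; the view names as strings, the function names `view_range` and `duty_cycle`, and the 48 kHz sample rate are hypothetical and not taken from the tool itself.

```python
def view_range(view, duration_s, groups_per_second, burst_ms, sample_rate=48000):
    """Return (start, stop) sample indices to draw for a given view."""
    if view == "full":
        span_s = duration_s                  # the whole signal
    elif view == "cycle":
        span_s = 1.0 / groups_per_second     # one burst plus its silence
    elif view == "burst":
        span_s = burst_ms / 1000.0           # only the carrier itself
    else:
        raise ValueError(f"unknown view: {view}")
    return 0, round(span_s * sample_rate)

def duty_cycle(groups_per_second, burst_ms):
    """Fraction of each repetition that contains sound."""
    return (burst_ms / 1000.0) * groups_per_second

for name in ("full", "cycle", "burst"):
    print(name, view_range(name, duration_s=1.0, groups_per_second=40, burst_ms=5))
```

For a one-second signal with 40 groups per second and 5 ms bursts, the three windows cover 48000, 1200, and 240 samples, so the same data is drawn at three very different zoom levels. The sound-to-silence ratio that One Cycle View makes visible corresponds to a duty cycle of about 0.2 in this example.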

I still remember the first time I truly saw a 10,000 Hz waveform rendered on screen.

An abstract number suddenly became a real, visible curve.

And in that moment, I suddenly understood something:

Sound itself is not really “sound.”

It is simply the shape left behind after air and time have been sliced into patterns.

Later, I gradually added RMS, Peak, and DC Offset readouts, and eventually WAV export, because I started feeling that if this prototype was going to become a reliable experimental tool, it couldn’t merely “produce sound.” It also needed to clearly describe what it was actually generating.
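For reference, these three measurements have standard definitions: RMS is the square root of the mean of the squared samples, Peak is the largest absolute sample value, and DC Offset is the mean of the samples. A minimal Python sketch of those definitions follows; the function name `signal_stats` and the dictionary keys are hypothetical, and the tool's own implementation may differ in details such as units or windowing.

```python
import math

def signal_stats(samples):
    """Compute RMS, peak, and DC offset for a list of float samples."""
    n = len(samples)
    if n == 0:
        raise ValueError("samples must not be empty")
    dc_offset = sum(samples) / n                       # average value of the signal
    peak = max(abs(s) for s in samples)                # largest excursion from zero
    rms = math.sqrt(sum(s * s for s in samples) / n)   # root mean square level
    return {"rms": rms, "peak": peak, "dc_offset": dc_offset}

# A square-like test signal that alternates between +1 and -1.
print(signal_stats([1.0, -1.0, 1.0, -1.0]))
```

A DC offset close to zero is a useful sanity check: it suggests the generated waveform is centred, so the bursts are not adding a constant bias on top of the carrier.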

The addition of WAV download came from the same idea. I didn’t want this experiment to exist only inside a browser. Once the signal could be exported, it could be analyzed, edited, layered, or integrated into entirely different research workflows.
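To give a sense of what the export step involves, here is a hedged Python sketch that encodes float samples as a WAV file using the standard library `wave` module. It is not the tool's browser implementation. The choice of 16-bit mono PCM, the 48 kHz sample rate, and the function name `to_wav_bytes` are assumptions made for the example.

```python
import io
import struct
import wave

def to_wav_bytes(samples, sample_rate=48000):
    """Encode float samples in [-1, 1] as 16-bit mono PCM WAV bytes."""
    frames = bytearray()
    for s in samples:
        s = max(-1.0, min(1.0, s))                      # clip out-of-range values
        frames += struct.pack("<h", round(s * 32767))   # little-endian signed 16-bit
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)        # mono
        wav.setsampwidth(2)        # 2 bytes per sample = 16 bit
        wav.setframerate(sample_rate)
        wav.writeframes(bytes(frames))
    return buf.getvalue()

data = to_wav_bytes([0.0, 1.0, -1.0])
print(data[:4], len(data))
```

The returned bytes can be written to disk with `open("pulse.wav", "wb")` (a hypothetical filename) and then opened in any audio editor or analysis tool.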

By the time I finally delivered the project, I realized the whole thing had become something completely different from what it originally was.

At first, it was just a small tool commissioned by a psychology professor.

But somewhere along the way, it turned into my own exploration of time, perception, and sound.

And it was also the first time I truly realized:

Some things can only be understood after you build them with your own hands.

The Pure Tone Generator is a sound and signal experiment for learning and creative coding, not a medical or therapeutic tool.

You can adjust duration, groups per second, burst length, carrier frequency, envelope shape, and playback volume.
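Of these parameters, envelope shape is the least self-explanatory. An envelope is a per-sample gain applied across each burst, and it determines whether the burst starts and stops abruptly or fades in and out. The Python sketch below illustrates the idea with two common shapes, a rectangular gate and a Hann (raised-cosine) window. The shape names and the function `envelope` are assumptions for illustration; the source does not state which shapes the tool offers.

```python
import math

def envelope(shape, length):
    """Return `length` gain values in [0, 1] to multiply with one burst."""
    if length < 1:
        raise ValueError("length must be at least 1")
    if shape == "rectangular":
        # Hard gate: full volume for the whole burst, abrupt edges.
        return [1.0] * length
    if shape == "hann":
        # Raised cosine: fades in from zero and back out to zero.
        if length == 1:
            return [1.0]
        return [0.5 - 0.5 * math.cos(2 * math.pi * i / (length - 1))
                for i in range(length)]
    raise ValueError(f"unknown envelope shape: {shape}")

# Apply a Hann envelope to a short burst of constant amplitude.
burst = [1.0] * 5
shaped = [s * g for s, g in zip(burst, envelope("hann", len(burst)))]
print(shaped)
```

A smoother envelope generally softens the abrupt edges of a very short burst, which tends to reduce how click-like it sounds.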